Elon Musk Unleashes Legal Battle, Puts OpenAI's Safety Record Under Intense Scrutiny!

Elon Musk’s legal challenge against OpenAI centers on a critical question: how the company’s for-profit subsidiary impacts its foundational mission to ensure artificial general intelligence (AGI) benefits humanity. This pivotal argument was recently heard in a federal court in Oakland, California, where former employees and experts testified about a perceived shift away from AI safety towards product commercialization.

Rosie Campbell, who joined OpenAI’s AGI readiness team in 2021 and departed in 2024, testified about a significant organizational change. She noted that both her team and the Superalignment team were disbanded around the same period. Campbell described the early OpenAI as “very research-focused,” a place where it was “common for people to talk about AGI and safety issues,” but said it later became “more like a product-focused organization.” While acknowledging that building AGI requires substantial funding, she emphasized that developing super-intelligent models without adequate safety measures would betray the mission she had joined to pursue.

Campbell highlighted a specific incident involving Microsoft's deployment of a version of OpenAI’s GPT-4 model in India via its Bing search engine. This deployment occurred before the model had been evaluated by OpenAI’s internal Deployment Safety Board (DSB). Although she deemed the model itself not a “huge risk,” Campbell stressed the importance of establishing “strong precedents as the technology gets more powerful,” advocating for reliable safety processes. During cross-examination, she conceded that, in her “speculative opinion,” OpenAI’s safety approach surpasses that of xAI, Musk’s AI company, which was recently acquired by SpaceX. OpenAI publicly shares model evaluations and a safety framework, though it refrained from commenting on its current approach to AGI alignment, despite hiring Dylan Scandinaro from Anthropic as its new head of preparedness.

The deployment of GPT-4 in India was among several “red flags” that led OpenAI’s non-profit board to briefly oust CEO Sam Altman in 2023. This incident followed complaints from employees, including then-chief scientist Ilya Sutskever and then-CTO Mira Murati, concerning Altman’s conflict-averse management style. Tasha McCauley, a board member at the time, testified about her concerns regarding Altman’s lack of transparency, which hindered the board’s ability to effectively function within its unusual structure.

McCauley detailed instances where Altman allegedly misled the board. These included lying to another board member about McCauley’s intention to remove Helen Toner, a third board member who had published a white paper implying criticism of OpenAI’s safety policy. Furthermore, Altman failed to inform the board about the decision to publicly launch ChatGPT and did not disclose potential conflicts of interest. McCauley articulated the board's mandate: “We are a non-profit board and our mandate was to be able to oversee the for-profit underneath us.” She conveyed the board’s profound lack of confidence, stating, “We did not have a high degree of confidence at all to trust that the information being conveyed to us allowed us to make decisions in an informed way.”

However, the board’s decision to remove Altman coincided with a tender offer to company employees. When OpenAI staff rallied behind Altman and Microsoft intervened to restore the status quo, the board ultimately reversed its decision, and the members who had voted to remove him stepped down. This apparent failure of the non-profit board to influence the for-profit organization bears directly on Musk’s lawsuit, which argues that OpenAI’s transformation from a research entity into a major private company violated the implicit agreement of its founders.

David Schizer, a former dean of Columbia Law School and an expert witness for Musk’s team, echoed McCauley’s concerns. He said, “OpenAI has emphasized that a key part of its mission is safety and they are going to prioritize safety over profits.” Schizer added that this commitment means “taking safety rules seriously, if something needs to be subject to safety review, it needs to happen. What matters is the process issue.”

With AI increasingly integrated into for-profit enterprises, the implications of OpenAI’s internal governance issues extend far beyond a single laboratory. McCauley argued that these failures should prompt greater government regulation of advanced AI, noting, “[if] it all comes down to one CEO making those decisions, and we have the public good at stake, that’s very suboptimal.”

You may also like...

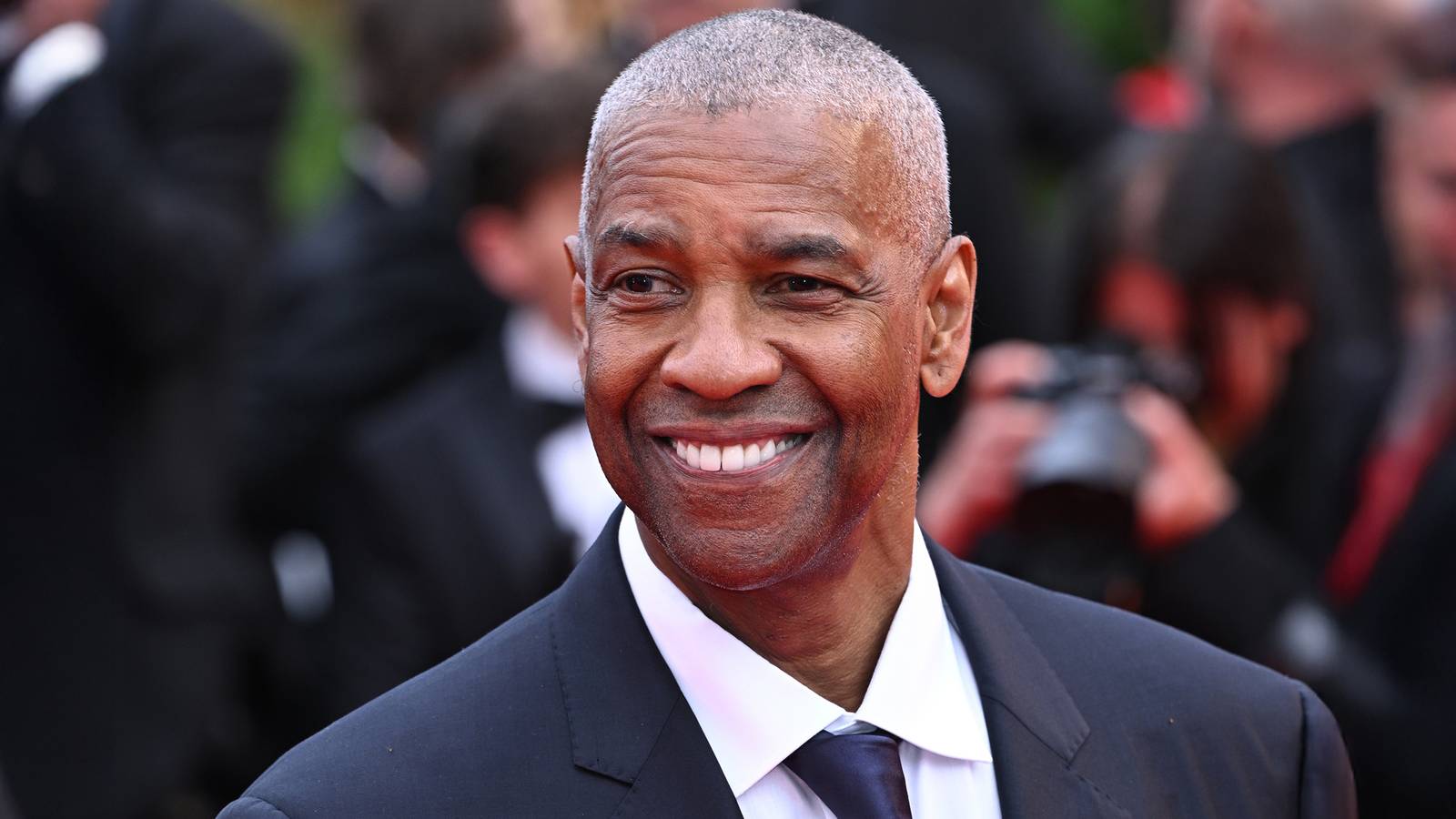

Denzel Washington's Iconic 'John Wick'-Style Franchise Dominates Streaming 12 Years Later!

Denzel Washington continues his prolific career with upcoming projects like Netflix's "Here Comes the Flood" and the str...

First Look: 'The Batman: Part 2' Reveals Batmobile and Robert Pattinson's Return!

The highly anticipated "The Batman: Part 2" has offered its first official glimpse, revealing Robert Pattinson's Batmobi...

Namibia's Tourism Model Faces Crisis: Competition Probe Sparks Industry Backlash

A regulatory investigation into exclusive tourism arrangements in northwestern Namibia is sparking a fierce debate, pote...

Central Africa Soars: Air Congo Launches Historic Kinshasa-Brussels Route

Air Congo is set to launch its first long-haul route connecting Kinshasa to Brussels, marking a significant expansion fo...

Elon Musk Unleashes Legal Battle, Puts OpenAI's Safety Record Under Intense Scrutiny!

Elon Musk's lawsuit against OpenAI highlights a critical conflict between the company's founding mission of AI safety an...

Chinese AI Powerhouse Moonshot Secures Staggering $2B Funding, Valued at $20B!

Chinese AI lab Moonshot AI has raised $2 billion at a $20 billion valuation, bringing its total funding in six months to...

Hantavirus Scare: Cruise Ship Approaches Europe Amid Spreading Rat Virus Fears

A hantavirus outbreak on the MV Hondius cruise ship has led to multiple cases and three deaths, with the Andes virus str...

Democracy Under Threat: CSOs Seek Court Intervention to Halt Rapid Passage of 70+ Controversial Bills in Parliament

Opposition political party vice president Sean Tembo and two Civil Society Organisations have strongly condemned the Zam...