Pentagon Labels AI Innovator Anthropic an 'Immediate Supply Chain Risk'

The Trump administration has taken the unusual step of designating artificial intelligence company Anthropic as a supply chain risk, a move that could force government contractors to discontinue using the firm’s AI chatbot, Claude.

The Pentagon formally notified the company that the designation would take effect immediately, effectively ending negotiations between the government and the San Francisco–based AI developer.

The decision followed accusations from Donald Trump and Defense Secretary Pete Hegseth that the company’s restrictions on how its technology could be used posed a potential threat to national security.

The standoff intensified after Anthropic CEO Dario Amodei refused to remove safeguards designed to prevent the system from being used for mass domestic surveillance or fully autonomous weapons programs.

In its statement, the Pentagon argued that the military must retain the authority to use technology for all lawful purposes and warned that it would not allow private vendors to limit how critical tools are deployed in defense operations.

Officials said allowing such restrictions could interfere with military decision-making and potentially endanger warfighters.

Under U.S. procurement rules, labeling a company a supply chain risk can restrict the use of its products in military contracts and compel defense contractors to seek alternative providers.

The designation is particularly significant because the rule is typically used against foreign adversaries suspected of tampering with technology supply chains, not against domestic firms.

Anthropic has rejected the designation and announced plans to challenge it in court, arguing that the government’s action is legally unsound.

Amodei clarified that the company’s proposed safeguards applied only to high-level use cases, specifically prohibiting mass surveillance of Americans and fully autonomous weapons systems, while not interfering with operational military decisions.

He also noted that the designation appears to apply only to the use of Claude within direct Pentagon contracts, meaning most commercial and civilian customers remain unaffected.

The dispute has sparked wider debate across the technology sector, with critics warning that the move could discourage other AI firms from working with the U.S. government while deepening tensions between Silicon Valley and the defense establishment.

You may also like...

Knicks Shock Spurs in Game 1 Thriller, Fans Banned for Wild Finals Opener!

The New York Knicks stole Game 1 of the 2026 NBA Finals, defeating the San Antonio Spurs 105-95 to take an early 1-0 ser...

Netflix Sensation: Alan Ritchson's Sci-Fi Thriller Dominates Viewing Charts!

Netflix's latest viewership data highlights the success of mid-budget films, with the sci-fi action movie 'War Machine' ...

East Meets West: Kenshi Yonezu, BTS, & Mrs. GREEN APPLE Dominate Billboard Japan Charts!

Kenshi Yonezu's "IRIS OUT" dominates Billboard Japan's 2026 mid-year charts, topping the Japan Hot 100 and Global Japan ...

Unveiled: Drake’s Engineer Spills Secrets on Original ‘ICEMAN’ & Unreleased Tracks!

Drake's recent album trio, including ICEMAN, HABIBTI, and MAID OF HONOUR, has dominated the music scene, with ICEMAN top...

Global Stage: Rema, Davido, Burna Boy & Ayra Starr Represent Nigeria on FIFA World Cup 2026 Album!

The Official FIFA World Cup 2026 Album, set for release on June 5, 2026, features four Nigerian artists: Burna Boy, Rema...

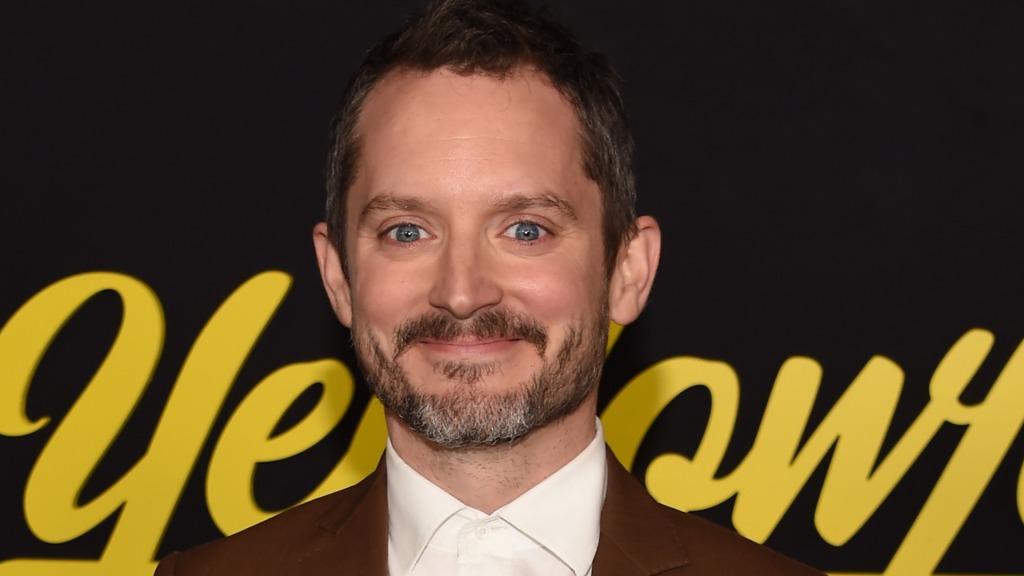

Elijah Wood Unleashes Fury Over Alamo Drafthouse's Broken Mobile Ordering System

Alamo Drafthouse Cinemas has unexpectedly shifted its long-standing no-phone policy, now encouraging smartphone use for ...

Bitcoin's Brutal Plunge: Market Reels as BTC Dips Below $62K, Sparks FTX Crash Echoes

Bitcoin's price recently dropped into "Fire Sale!" territory on the Bitcoin Rainbow Chart, a level last seen during the ...

Is $60K the Bottom? Schwab Strategist Hints Bitcoin's Mining Cost Signals Cycle Low

Jim Ferraioli of Charles Schwab argues that Bitcoin's bear market has a structural cost floor around $60,000, rooted in ...