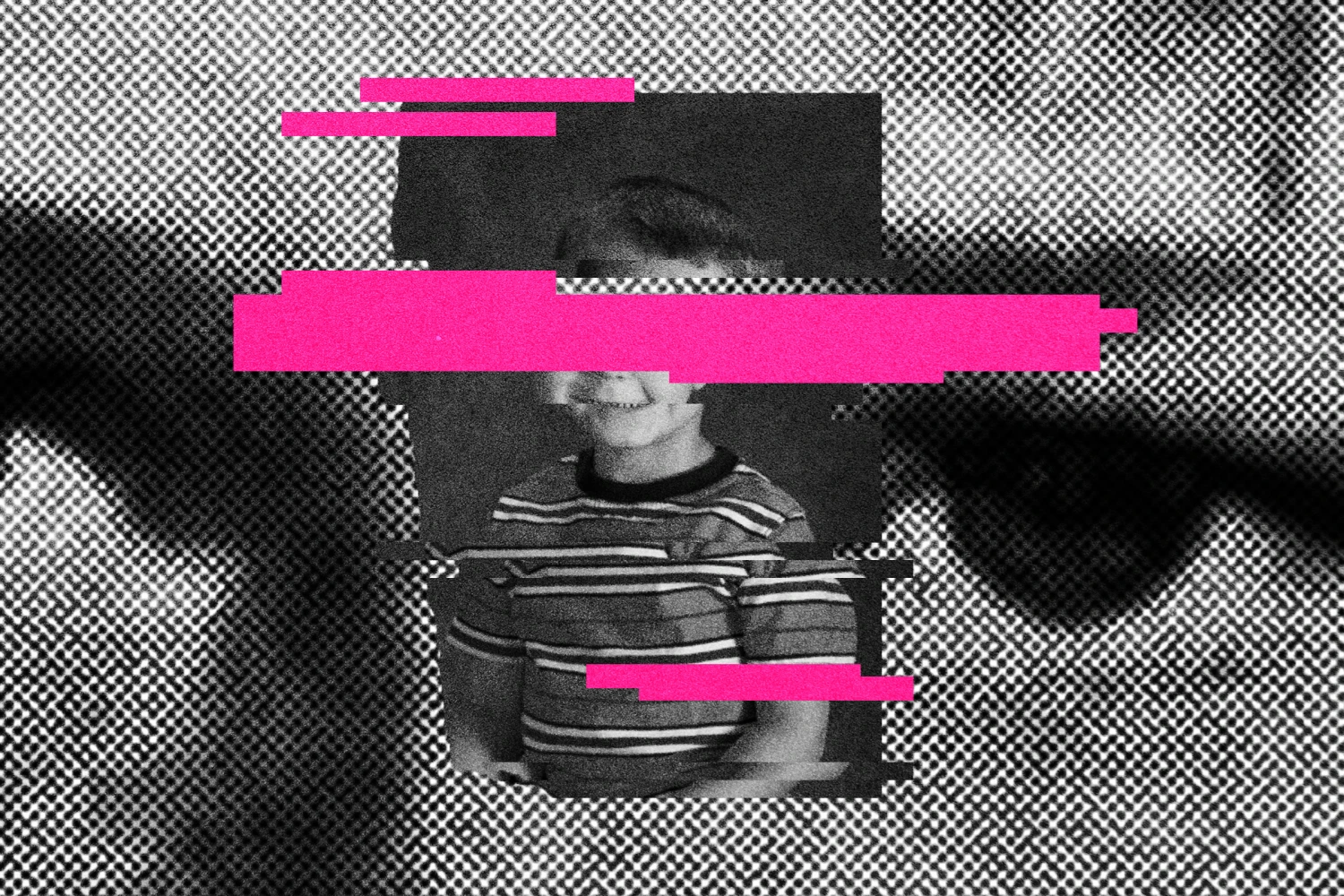

OpenAI Unleashes Crucial Safety Blueprint to Combat Child Exploitation Crisis

OpenAI has unveiled a comprehensive Child Safety Blueprint designed to bolster U.S. child protection efforts in response to the rapid advancements and associated risks of artificial intelligence.

Released on Tuesday, this blueprint aims to facilitate faster detection, improve reporting mechanisms, and enhance the efficiency of investigations into cases involving AI-enabled child exploitation.

The overarching goal of the Child Safety Blueprint is to combat the alarming surge in child sexual exploitation that has been linked to the evolution of AI technologies.

The Internet Watch Foundation (IWF) reported a stark increase, with more than 8,000 instances of AI-generated child sexual abuse content detected in the first half of 2025 alone, representing a 14% rise from the preceding year.

This includes the nefarious use of AI tools by criminals to create fabricated explicit images of children for financial sextortion schemes and to generate highly convincing messages for grooming vulnerable individuals.

This initiative from OpenAI comes at a time of increased examination from various groups, including policymakers, educators, and child-safety advocates.

This heightened scrutiny has been particularly amplified by tragic incidents, such as cases where young individuals allegedly died by suicide following interactions with AI chatbots.

Notably, in November, the Social Media Victims Law Center and the Tech Justice Law Project filed seven lawsuits in California state courts.

These lawsuits contend that OpenAI prematurely released its GPT-4o product, asserting that its psychologically manipulative nature played a role in wrongful deaths by suicide and assisted suicide.

They specifically cited four individuals who died by suicide and three others who developed severe, life-threatening delusions after prolonged engagement with the chatbot.

The Child Safety Blueprint was developed through a collaborative effort, involving key partners such as the National Center for Missing and Exploited Children (NCMEC) and the Attorney General Alliance, and benefiting from feedback provided by North Carolina Attorney General Jeff Jackson and Utah Attorney General Derek Brown.

OpenAI states that the blueprint is structured around three core aspects: updating existing legislation to encompass AI-generated abusive material, refining reporting procedures to law enforcement agencies, and integrating preventative safeguards directly into its AI systems.

By focusing on these pillars, OpenAI aims not only for earlier identification of potential threats but also to ensure that actionable intelligence is promptly delivered to investigators.

The new Child Safety Blueprint builds on and extends OpenAI's previous initiatives. These include updated guidelines for interactions with users under the age of 18, which explicitly prohibit the generation of inappropriate content, discourage self-harm, and advise against actions that would enable young people to conceal unsafe behavior from their caregivers.

The company has also recently introduced a similar safety blueprint specifically tailored for teens in India, demonstrating a broader commitment to child safety across its global operations.
