Anthropic Defies Pentagon, Stands Firm on AI Safeguards Amidst Looming Deadline

A public dispute has erupted between the Pentagon and AI company Anthropic over ethical parameters for military use of the AI chatbot Claude. Defense Secretary Pete Hegseth issued an ultimatum to Anthropic CEO Dario Amodei, demanding unrestricted use of the AI by Friday and threatening severe business repercussions.

Amodei, however, stated Anthropic "cannot in good conscience accede," setting a firm ethical boundary just 24 hours before the deadline.

Anthropic, a rapidly growing AI startup, requested guarantees that Claude would not be used for mass surveillance of Americans or fully autonomous weapons. The company criticized the new contract language as containing legal loopholes that would allow safeguards to be bypassed.

The Pentagon, through spokesman Sean Parnell, insisted that it "will not let ANY company dictate operational decisions," while Undersecretary for Research and Engineering Emil Michael accused Amodei of a "God-complex" and alleged he was endangering national security.

The military warned Anthropic of potential consequences, including contract cancellation and designation as a "supply chain risk," which could impact critical partnerships. Officials also cited the possibility of invoking the Defense Production Act, which could compel Anthropic’s cooperation.

Support for Amodei’s ethical stance has emerged from across Silicon Valley, with workers from OpenAI and Google signing an open letter opposing the Pentagon’s demands.

The letter also alleged that the military is negotiating similar unrestricted access with other AI companies in an effort to divide the industry.

Former Air Force Gen. Jack Shanahan, who previously led Project Maven, praised Anthropic’s red lines as "reasonable," noting Claude’s existing use in classified government settings.

Shanahan warned that AI large language models are "not ready for prime time in national security settings," especially regarding autonomous weapons.

Despite Pentagon assurances that AI will not be used for mass surveillance or fully autonomous weapons, specifics remain unclear. Amodei emphasized that if no agreement is reached, Anthropic will ensure a smooth transition to an alternative provider, maintaining both ethical and operational integrity.

You may also like...

Thunder's Playoff Nightmare: Key Players Struggle as Spurs Force Game 7 Showdown!

The San Antonio Spurs forced a decisive Game 7 in the Western Conference finals by dominating the Oklahoma City Thunder ...

Arsenal's Ultimate Test: UCL Final Pressure Mounts as Club Legends Debate Team Choices and Legacy

Arsenal faces holders Paris Saint-Germain in the Champions League final on May 30, a match critical for establishing the...

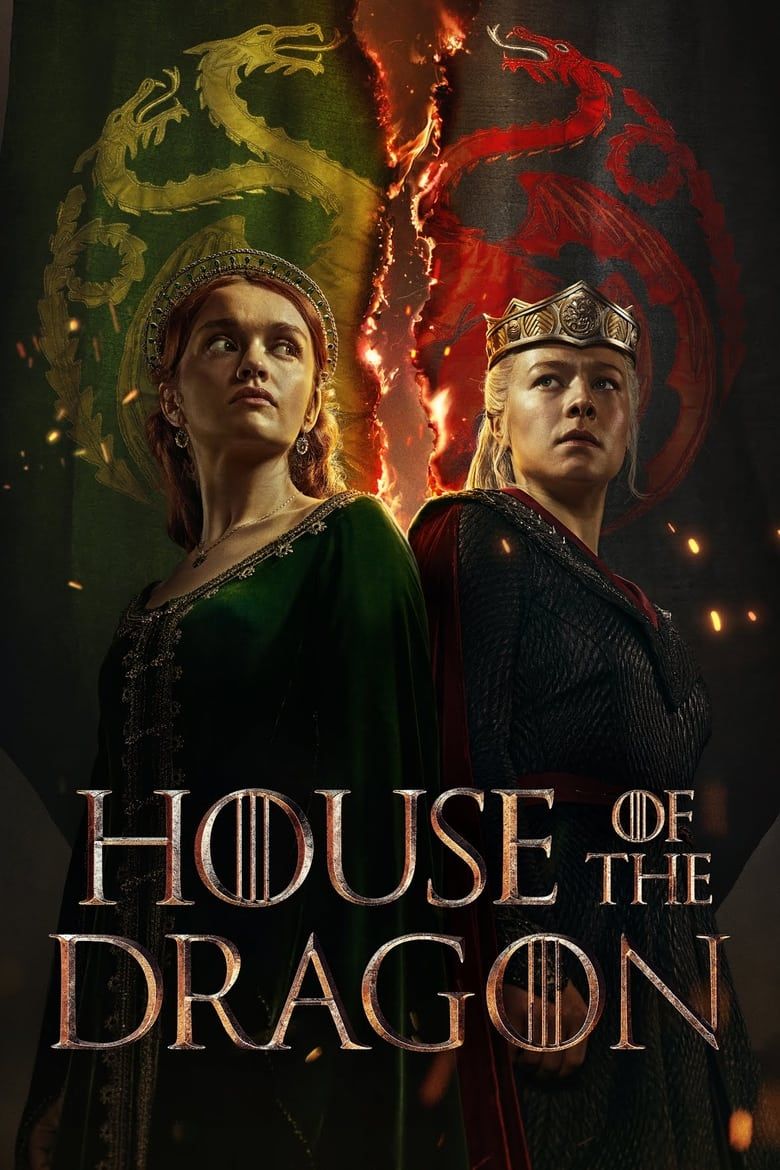

Fire & Blood Returns! ‘Game of Thrones’ Prequel Unleashes Explosive Season 3 Trailer

House of the Dragon Season 3 is set to ignite the full-scale Dance of the Dragons, escalating the conflict between Rhaen...

Horror Hit: ‘Backrooms’ Shatters A24 Records with $10.4 Million Previews!

A24's new horror movie "Backrooms," based on Kane Parsons' YouTube series, is set to dominate the box office, making $10...

Bret Michaels Withdraws From State Fair, Denies Political Motives!

Bret Michaels has pulled out of the Great American State Fair, citing increasing divisiveness and unfounded safety threa...

Maisie Peters' 'Florescence' Dominates ARIA Charts!

Maisie Peters claims her first No. 1 on the ARIA Albums Chart with "Florescence," while Olivia Rodrigo earns her fifth c...

Unveiled: The Hidden Formula Behind Prime Video's Latest Thriller Phenomenon!

Karen Rodriguez discusses her impactful roles in "The Hunting Wives" and "Spider-Noir," where she stars opposite Nicolas...

Shocking Twist: 'One Piece' Character Undergoes Unprecedented Transformation!

Mikaela Hoover is having a standout year, notably bringing Tony Tony Chopper to life in Netflix's live-action <em>One Pi...