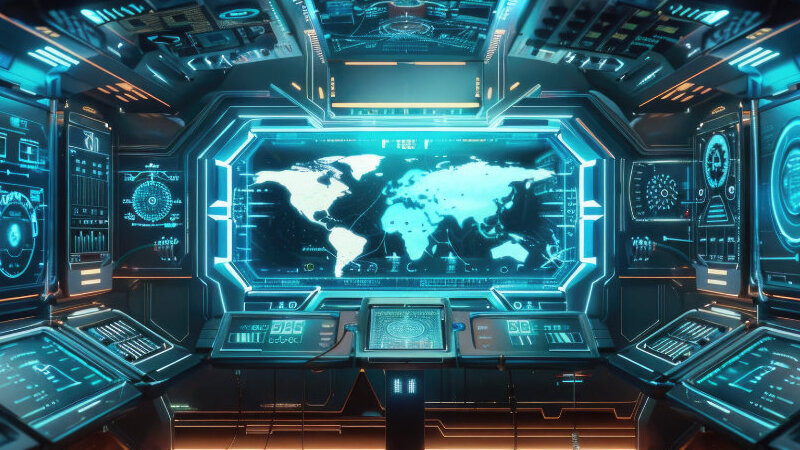

AI's Next Battlefield: Will Future Wars Depend on Algorithms?

On February 28, 2026, the United States and Israel launched a coordinated strike campaign against Iran, code-named Operation Epic Fury, the largest concentration of military power in nearly three decades.

It was a defining moment for modern warfare, not just for its scale, but for what was reportedly running behind the scenes: an artificial intelligence model helping soldiers identify targets, assess intelligence, and simulate battlefield outcomes in real time.

That AI was Claude, made by a company called Anthropic. The same Claude that, just hours earlier, the U.S. government had officially banned.

That contradiction, banning a tool and then using it within a day in an active war, is more than an irony.

It is a window into exactly how deeply AI has already embedded itself into the machinery of modern conflict.

It Already Happened

According to reports from Reuters citing unnamed sources, U.S. Central Command used Claude during Operation Epic Fury for intelligence assessment, target identification, and battlefield simulations.

This came just nineteen hours after Defense Secretary Pete Hegseth designated Anthropic a "supply chain risk to national security" and ordered all federal agencies to halt use of its systems. That designation itself followed days of escalating tension over the Pentagon's demand for unrestricted access to the AI and Anthropic's refusal to remove its ethical restrictions.

Let that sink in. A government declared a technology company a national security threat, then used that company's technology to help conduct airstrikes.

For its part, Anthropic stated it supports "all lawful uses of AI for national security," while continuing to challenge the supply-chain designation in court.

The Pentagon, meanwhile, announced it would work with Anthropic for up to six more months during a phase-out period, a timeline that conveniently covers much of mid-2026.

The AI Arsenal

What happened over Iran didn't come out of nowhere. AI integration in U.S. military operations has been building for years.

In July 2025, the Department of Defense awarded contracts worth up to $200 million for AI services from Anthropic, OpenAI, Google, and Elon Musk's xAI.

These were usage contracts, with the models deployed on classified networks to give warfighters direct access to frontier AI.

Israel has been on a parallel track. Systems known as "Habsora" and "Lavender," widely reported on during the fighting in Gaza, process large volumes of data to generate and prioritize target banks at a pace no human analyst could match.

A key distinction is that Israeli systems are largely developed internally by military technology units, while the U.S. has leaned heavily on private commercial firms. That reliance creates friction, as the Anthropic episode made plain.

The AI arms race is not just American or Israeli. Iran has integrated AI into its own arsenal, including AI-augmented unmanned vehicles.

During the broader 2025 Israel-Iran exchanges, AI-generated deepfakes and propaganda videos spread disinformation in real time, complicating an already volatile information environment.

What AI Actually Does in a War Zone

When most people hear "AI in warfare," they picture autonomous killer robots. The reality today is more procedural and, in some ways, more unsettling for being so mundane.

AI systems currently process satellite and sensor data faster than any human team can. They flag anomalies, cross-reference intelligence databases, and surface in seconds patterns that analysts might miss even after days of review.

They run battle simulations, modeling how a mission might unfold before it launches.

They support cyber operations, both offensive and defensive. And increasingly, they are used both to generate and spread disinformation and to counter it.

The line military officials consistently draw is that AI informs the humans who decide; it does not decide itself. That distinction matters legally and ethically.

But it is a thin and narrowing line. When an AI system generates a target list and a human approves it under time pressure, how meaningful is that human judgment, really?

The Ethical Minefield

These are not hypothetical questions. When an AI-assisted strike results in civilian casualties, who is accountable? The algorithm? The company that built it? The soldier who pressed the button? The commander who signed off?

Current international law was not written with autonomous or semi-autonomous targeting in mind.

Anthropic's resistance points to a deeper tension. AI companies building safety constraints into their models are, in effect, making ethical choices about how their technology can be used in war, choices that governments argue are theirs alone to make.

Defense Secretary Hegseth called Anthropic's refusal "arrogance and betrayal." Anthropic argued its restrictions are not optional features but fundamental safety commitments.

Both positions reflect genuine, reasonable concerns. Neither fully resolves the question.

Meanwhile, AI systems operate faster than any chain of command can verify their outputs in real time, and that gap is where mistakes and atrocities happen.

An Arms Race Nobody Voted For

Every major power is now racing to field AI-enabled military capabilities faster than its adversaries.

Smaller states and non-state actors are not far behind. AI lowers the barrier to entry for sophisticated operations: tools once available only to well-resourced militaries are increasingly accessible across the board.

There is no global treaty governing AI in warfare.

There is no agreed-upon red line for autonomous weapons. There is no international body with the mandate or the authority to enforce one.

The technology is moving faster than diplomacy and ethics and, as February 28 demonstrated, faster than governments' own rules for it.

A Question Worth Sitting With

The story of AI in the Iran strikes isn't really about one AI company, or one executive order, or one operation. It is about the moment humanity crossed into a new era of warfare and kept going, barely pausing to look back.

AI in war is not coming. It is here.

The question is not whether algorithms will shape future conflicts; they already do.

The question is whether the institutions meant to govern armed conflict can move fast enough to keep pace with the technology reshaping it.

If a government cannot enforce its own AI rules for even a single day, what happens when the next war starts, and the next, and the one after that?

That question doesn't have a comfortable answer. But it is the one we need to be asking.

More Articles from this Publisher

Africa's Dark Files: The Kasai Murders and the Truth Someone Wanted Buried

Two UN investigators went to document war crimes in the DRC's Kasai region. They were murdered. Nearly a decade later, t...

Ojude Oba Festival 2026: Should It Have Been Cancelled After the Oyo School Kidnappings?

Ojude Oba 2026 continued as 46 kidnapped Nigerians remained in the forest. Here's why cancellation was never simple and ...

A Continent on Credit: The Ten-Year Story Behind Africa's Growing Debt Burden

Africa’s debt crisis explained through a decade-long analysis (2015–2026), covering rising debt-to-GDP ratios, Eurobond ...

The Game of Money UK Chesterfield 2026: The Wealth Conversations the African Diaspora Has Been Missing

The Game of Money UK Chesterfield 2026 brought African diaspora professionals together for honest conversations on wealt...

The Game of Money Is Coming to Chesterfield — Here's Why You Should Be in That Room

The Game of Money Conference is coming to Chesterfield on May 16, 2026, bringing practical conversations on wealth creat...

Made in Nigeria Cars: 5 Local Automobile Brands Most People Have Never Heard Of

From Innoson's Nnewi factory to Nord's EVs, Nigeria has a car manufacturing industry most people don't know exists. Meet...

You may also like...

Canadian Basketball Shake-Up: SGA Confirmed, Murray Sidelined for National Team!

The Canadian men's basketball team has announced its initial roster for the 2027 FIBA World Cup and 2028 Summer Olympics...

Curry's Global Leap: NBA Superstar Inks Groundbreaking Deal with Li-Ning!

Stephen Curry has inked a massive 10-year endorsement deal with Chinese company Li-Ning, ending his sneaker free agency ...

Olyphant's R-Rated Netflix Hit: New Series Dominating the Global Streaming Scene!

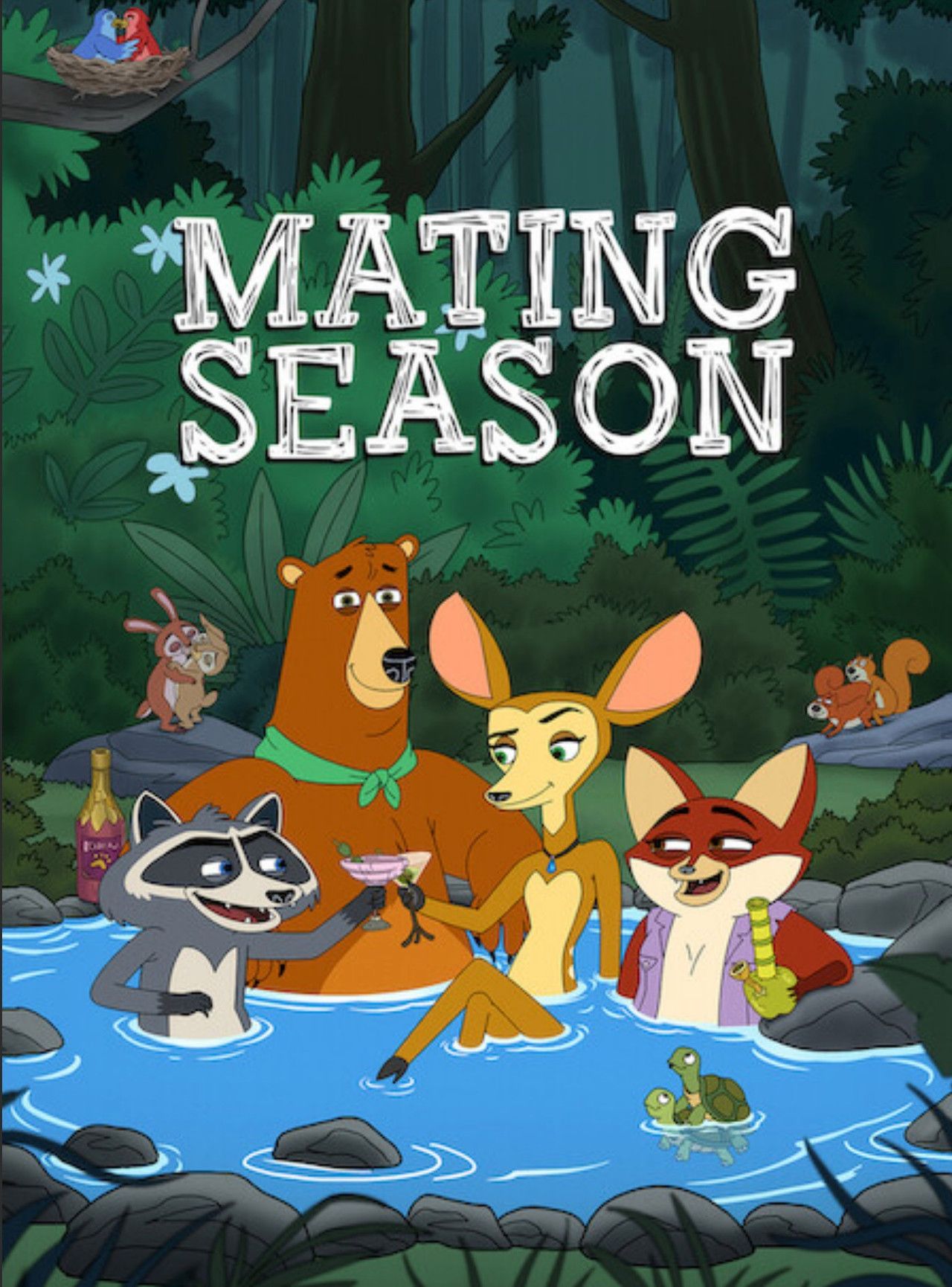

Netflix's animated series, <i>Mating Season</i>, is a global hit, charting in over 20 countries with its raunchy take on...

Cult Classic Reimagined: Zack Snyder to Helm John Carpenter Sci-Fi Remake!

Zack Snyder is reportedly set to direct a remake of John Carpenter's 1981 sci-fi action cult classic, Escape From New Yo...

Global Chart Sensation: BTS's 'Swim' Matches 'Dynamite' Record!

BTS's "Swim" makes history with an eighth week at No. 1 on the Billboard Global Excl. U.S. chart, matching "Dynamite" fo...

Star-Studded Latin Lineup Revealed for Coca-Cola Flow Fest 2026!

Coca-Cola Flow Fest 2026 has announced its star-studded lineup, featuring headliners like Anuel AA, Ozuna, and Kali Uchi...

Former Miss Nigeria Chioma Amadi Masters Design, Inspires Beyond Crown!

The 40th Miss Nigeria, Chioma Amadi, has achieved a Master's degree in Interior Design from America's top design school,...

Tennis Queen Serena Williams Stuns Fans with Epic Comeback Announcement!

Tennis legend Serena Williams has announced her highly anticipated return to professional tennis, nearly four years afte...