AI's Dark Side: State Actors Fueling Iran War Misinformation

The recent conflict involving the bombing of Iran by U.S. and Israeli forces has been significantly complicated by a surge in misrepresented and fabricated videos circulating online.

A prominent example is a widely shared video depicting a high-rise building in Bahrain on fire, which social media users attributed to an Iranian attack.

However, the video was found to be AI-generated and spread by accounts associated with the Iranian government as part of an effort to exaggerate its military successes.

Clues such as malformed cars and a man's elbow seemingly passing through a backpack revealed the video's inauthenticity.

This deluge of inauthentic content is largely fueled by state-linked propaganda and influence campaigns, particularly concerning the war's progress and casualty counts.

Experts note that content from state actors is often well-targeted, using videos to support specific narratives about the conflict and the broader geopolitical situation.

Pro-Iran social media accounts, for instance, have adopted a narrative that inflates destruction and death tolls, a stance consistent with Iranian state media reports.

This strategy has led to numerous AI-generated videos of supposed air strikes, including the aforementioned Bahraini skyscraper incident.

Beyond Iran, a Russia-aligned influence operation named "Operation Overload" (also known as Matryoshka or Storm-1679) has been creating videos impersonating intelligence agencies and news outlets. These videos aim to undermine public safety and sway behavior, a tactic previously employed during election cycles.

One such example included a false warning, attributed to Israeli intelligence, advising Israelis in Germany and the U.S. to exercise extreme caution in public or avoid going outside entirely.

The issue of misinformation is further exacerbated by Iranian censorship, including internet shutdowns, which severely limits the availability of information from the Iranian public.

This absence of diverse perspectives, which could either support or challenge the government's narrative, contrasts sharply with conflicts like the Ukraine war, where robust public messaging played a crucial role in shaping global perception.

Opportunistic social media users, distinct from state actors, have also contributed heavily to the spread of misinformation during the early stages of the Iran war.

These users have posted old footage from unrelated conflicts, shared video game clips as real events, and generated their own AI content, all in pursuit of clicks and engagement.

The rapid advancement of artificial intelligence, in particular, has enabled new forms of misinformation that were not feasible in past conflicts, even just a few years ago.

Combined with state-linked disinformation and strict censorship, AI deepens an information vacuum in which the truth becomes increasingly elusive.

The volume of AI-generated content is now "polluting the information environment" in crisis settings to an alarming degree, making it progressively harder to access verified and credible information.

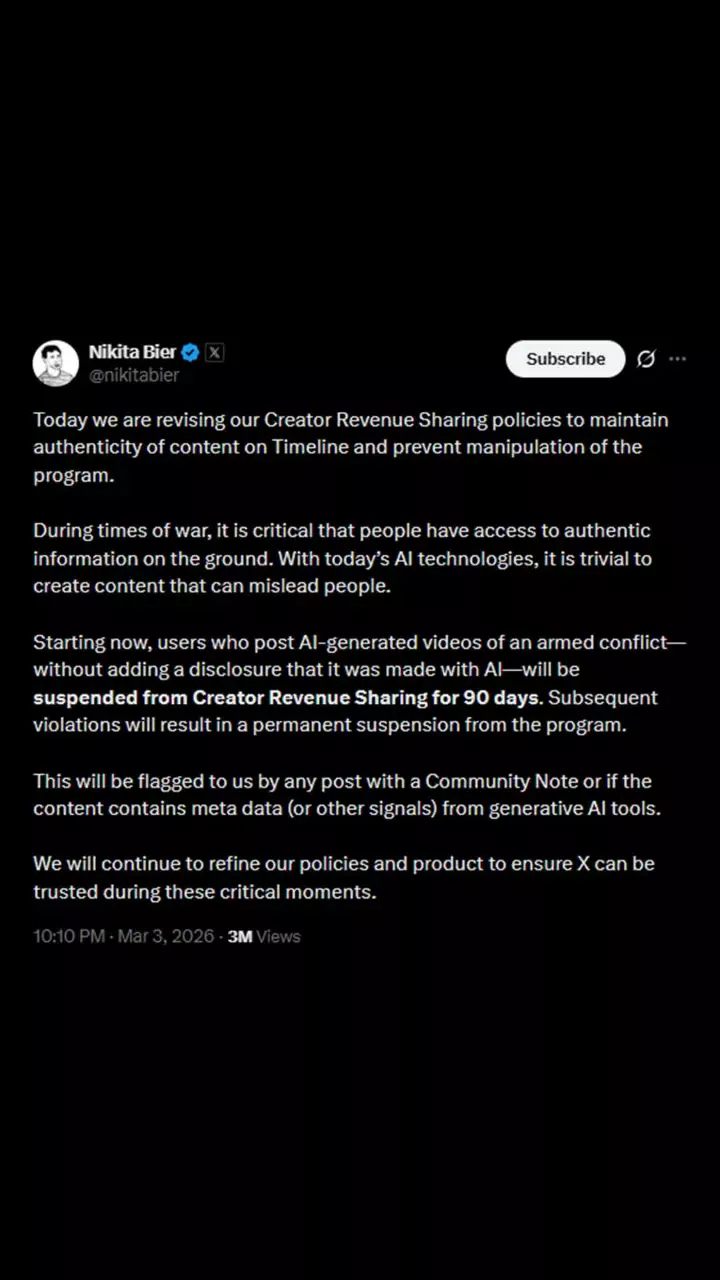

In response to this challenge, X (formerly Twitter) has implemented a policy to suspend users from its revenue-sharing program for 90 days if they post AI-generated content from an armed conflict without proper disclosure, with permanent suspension for repeat offenses.

Experts warn that social media platforms have become frontlines in modern warfare.

Users, even those geographically distant from the conflict, must recognize that these platforms are extensions of the physical battle space, where various actors actively disseminate propaganda and disinformation to manipulate perceptions.

User attention and engagement are considered valuable assets in this digital conflict.
