Niv-AI Emerges from Stealth with $12M to Tackle AI Data Center Power Inefficiency

Niv-AI has emerged from stealth with $12 million in funding to address a critical bottleneck in artificial intelligence infrastructure: inefficient power usage in data centers. As highlighted by Jensen Huang of Nvidia, AI operations waste significant energy; unstable power demands can cut GPU utilization by as much as 30%, which translates into lost revenue and underused capacity.

The issue stems from the rapid, unpredictable power spikes that large-scale AI workloads generate, which make grid stability increasingly difficult to maintain.

To manage these fluctuations, data centers currently rely on costly energy buffering systems or throttle GPU performance, both of which undermine efficiency and profitability.

Niv-AI aims to solve this by deploying millisecond-level, rack-based sensors to track GPU power consumption in real time, enabling precise mapping of energy usage across different AI tasks.

Backed by multiple venture firms and led by CEO Tomer Timor, the company is building tools to unlock unused compute capacity without compromising grid stability.

Looking ahead, Niv-AI plans to develop an AI-driven “copilot” that predicts and synchronizes power demand across entire data centers, effectively acting as an intelligence layer between infrastructure and the electrical grid.

This approach promises to maximize GPU utilization while creating more stable and efficient energy profiles, a crucial advancement as hyperscalers face growing constraints in scaling data center capacity.

The company expects to begin deployments in select U.S. facilities within the next six to eight months, positioning itself at the intersection of AI growth and energy optimization.

You may also like...

Arteta's Reality Check: Arsenal In 'Different World' Than Bayern, PSG

Mikel Arteta praised the PSG vs. Bayern Champions League semifinal as the best game he's seen, attributing its quality t...

Rooney Drops Age Bomb: Salah and Van Dijk Past Their Prime?

Wayne Rooney asserts that Mohamed Salah and Virgil van Dijk are succumbing to age, impacting their performance at Liverp...

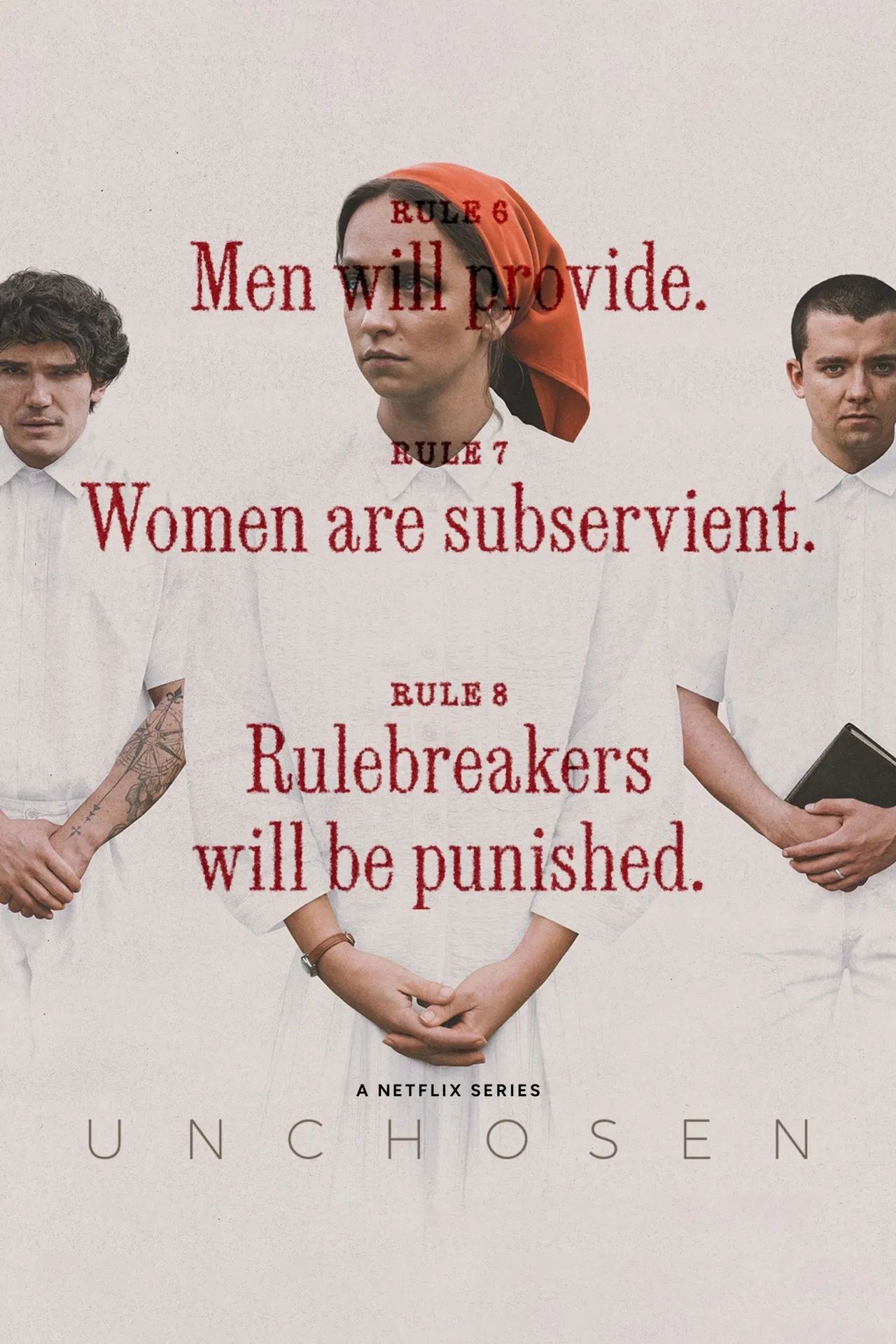

Netflix's Gripping Cult-Inspired Thriller Becomes a Global Phenomenon with 46.9 Million Hours Watched

Netflix's new drama series, "Unchosen," delves into the complex world of religious cults, inspired by real survivor stor...

Coachella Magic: Madonna and Geena Davis Share Sweet Reunion, Decades After Iconic Film

Hollywood legends Geena Davis and Madonna reunited on stage at Coachella during Sabrina Carpenter's set, 34 years after ...

Manilow's Health Battle: Legend Delays Vegas Residency Amidst Cancer Recovery Journey

Barry Manilow has postponed his May Las Vegas residency due to ongoing recovery from lung cancer surgery, but anticipate...

Reacher Fans Rejoice: Netflix Drops New Action Thriller for Perfect Weekend Binge!

Director Steven Caple Jr. discusses his pivotal role in shaping Netflix's <em>Man on Fire</em> Season 1, from establishi...

Ted Lasso Star Teases Exclusive Update for Apple TV's Next Sci-Fi Hit!

Juno Temple, celebrated for her role in Ted Lasso, is starring in a new Apple TV+ sci-fi dramedy, The Husbands. The seri...

Replit CEO Amjad Masad Unpacks Cursor Deal, Apple Rivalry & Stance on Selling

Amjad Masad details Replit's explosive growth to a projected billion-dollar annual run rate, emphasizing its unique valu...