You Can Reduce Your Claude Token Usage by Over 90%

As adoption of AI coding assistants grows, so does a common complaint: token allowances run out faster than expected.

It is natural to assume that more messages mean more usage, but token consumption depends less on how often prompts are sent and more on how much information the model must repeatedly process.

Every time Claude responds, it re-reads the conversation context, including previous instructions, code, logs, and corrections.

This means inefficient workflows can quietly burn through tokens without users realising it.

Because each new turn re-processes everything that came before it, the total cost grows faster than the number of messages. Understanding this hidden accumulation effect is the first step toward reducing waste.
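The accumulation effect can be made concrete with a little arithmetic. The sketch below is a simplified model, not a description of how Claude is billed: it assumes every turn adds a fixed number of tokens to the context, that the whole context is re-read on each turn, and it ignores any caching a provider may apply. The function name and the token figures are made up for illustration.

```python
def cumulative_input_tokens(turn_sizes):
    """Total input tokens processed when every turn re-reads all prior turns.

    turn_sizes: tokens added to the context by each turn (prompt plus reply).
    """
    total = 0
    context = 0
    for size in turn_sizes:
        context += size   # the context grows by this turn's text
        total += context  # the whole context is processed for this turn
    return total


# Ten turns of 500 tokens each (illustrative numbers only).
one_long_session = cumulative_input_tokens([500] * 10)
print(one_long_session)  # 27500

# The same ten turns split across two fresh five-turn sessions.
two_short_sessions = 2 * cumulative_input_tokens([500] * 5)
print(two_short_sessions)  # 15000
```

Under these assumptions the conversation contains only 5,000 tokens of text, yet 27,500 tokens are processed in total, and splitting the work into two sessions brings that down to 15,000.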

1. Keep Sessions Short and Task-Focused

One of the biggest token drains comes from overly long chat threads.

Claude must repeatedly read your growing history, even when much of it is no longer relevant.

For a more efficient workflow, start a new session when switching tasks, clear context once a task is complete, and avoid unrelated requests in the same thread.

Shorter sessions keep prompts lean and reduce the amount of text Claude must repeatedly process.

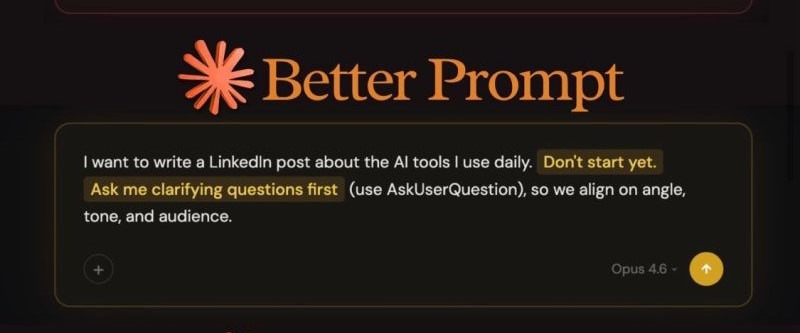

2. Write Better Prompts Instead of Endless Follow-Ups

Incremental prompts such as “Change this slightly”, “Fix that part too”, or “Adjust this again” are convenient, but each follow-up increases token cost because Claude must process the entire conversation again.

"Ambiguous or underspecified prompts conveyed progressively over multiple user turns tend to reduce token efficiency and sometimes performance." – Anthropic Prompting Best Practices

A better approach is to send one well-structured instruction containing all requirements upfront.
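One way to get into the habit of stating everything upfront is to assemble the prompt from a short checklist before sending it. The helper below is a hypothetical sketch: the function name, the section labels, and the example requirements are assumptions for illustration, not a format that Claude requires.

```python
def build_prompt(task, requirements, constraints=()):
    """Combine a task, its requirements, and any constraints into one prompt."""
    parts = [f"Task: {task}", "Requirements:"]
    parts += [f"- {item}" for item in requirements]
    if constraints:
        parts.append("Constraints:")
        parts += [f"- {item}" for item in constraints]
    return "\n".join(parts)


prompt = build_prompt(
    "Fix the login bug",
    ["Add a regression test", "Keep the existing error messages"],
    ["Do not change the public API"],
)
print(prompt)
```

Writing the requirements down as a list also makes it easier to spot what is missing before the first message is sent, rather than after the first reply.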

3. Batch Tasks Together

Task fragmentation is another hidden cost.

Instead of asking Claude to fix a bug, refactor code and add tests in separate steps, combine them into a single request.

Batching tasks allows the model to process context once and generate a more complete response in one cycle.
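A rough comparison shows why batching helps. The sketch below counts input tokens only and uses made-up sizes: a shared context (the code under discussion), a short prompt per task, and a reply that stays in the thread after each separate request. It is a simplified model under those assumptions, and both function names are hypothetical.

```python
def separate_requests(context, prompt, reply, n):
    """Input tokens when n tasks are sent one at a time in the same thread."""
    total = 0
    history = context
    for _ in range(n):
        history += prompt  # the new request joins the thread
        total += history   # the whole thread is processed
        history += reply   # the reply stays in the thread for the next turn
    return total


def batched_request(context, prompt, n):
    """Input tokens when the same n tasks are sent in a single message."""
    return context + prompt * n


# 2000-token code context, 50-token prompts, 400-token replies, 3 tasks.
print(separate_requests(2000, 50, 400, 3))  # 7500
print(batched_request(2000, 50, 3))         # 2150
```

With these numbers the batched request processes the shared context once instead of three times, and none of the earlier replies are re-read.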

4. Share Only Relevant Context

More information does not always produce better results.

Don’t paste entire files, massive logs or duplicate code snippets when only a small section is actually needed.

Claude processes everything provided, whether relevant or not.

Reducing input size directly reduces token usage.
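For logs in particular, a few lines around each error are often enough. The sketch below is one possible way to trim a log before pasting it; the function name, the `ERROR` keyword, and the two-line window are assumptions to adapt to your own log format.

```python
def relevant_lines(log_text, keyword="ERROR", before=2, after=2):
    """Keep only lines near a keyword match, preserving the original order."""
    lines = log_text.splitlines()
    keep = set()
    for i, line in enumerate(lines):
        if keyword in line:
            start = max(0, i - before)
            end = min(len(lines), i + after + 1)
            keep.update(range(start, end))
    return "\n".join(lines[i] for i in sorted(keep))


# A made-up 102-line log with a single error near the end.
log = "\n".join(f"INFO step {n}" for n in range(100))
log += "\nERROR disk full\nINFO retrying"

trimmed = relevant_lines(log)
print(trimmed)
```

Here the 102-line log shrinks to four lines: the two lines before the error, the error itself, and the one line after it.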

5. Use the Right Model for the Right Task

Not every task requires the most advanced model.

Light tasks such as formatting, rewriting, or small edits can often be handled with lighter or mid-tier models, while more powerful models should be reserved for complex reasoning or architecture-level work.

Using a high-capacity model for simple tasks can add unnecessary cost, since larger models are typically priced higher per token, without delivering proportional value.

Matching model strength to task complexity is a practical cost-saving habit.
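The habit can be written down as a simple lookup. In the sketch below the tier labels (`light`, `heavy`, `mid`) and the task categories are hypothetical placeholders, not real model names; map them to whichever models your plan offers. Falling back to a mid-tier model for unknown tasks is also an assumption.

```python
# Hypothetical tier labels; replace them with the models available to you.
TIER_BY_TASK = {
    "formatting": "light",
    "rewriting": "light",
    "small_edit": "light",
    "complex_reasoning": "heavy",
    "architecture": "heavy",
}


def pick_tier(task_type, default="mid"):
    """Return the tier for a task type, or the default for unknown tasks."""
    return TIER_BY_TASK.get(task_type, default)


print(pick_tier("formatting"))    # light
print(pick_tier("architecture"))  # heavy
print(pick_tier("code_review"))   # mid
```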

6. Avoid Correction Loops

Long correction chains are another major source of waste.

Repeatedly revising the same output in a single conversation increases context size and processing cost each time.

When a thread becomes messy, it is often more efficient to restart with a clean prompt outlining the final requirements clearly.

Conclusion

Reducing your Claude token usage by over 90% is more about workflow discipline than about sending fewer messages.

If you consistently keep sessions short, batch requests, avoid unnecessary follow-ups, limit pasted context and restart messy threads, you can dramatically reduce token waste while maintaining output quality.

AI tools are powerful, but like any system, efficiency matters.

In Claude’s case, smarter prompting habits may be just as valuable as the model itself.
