OpenAI's New Watchdog: Zico Kolter Leads Powerful Safety Panel with Veto Power on AI Releases

Zico Kolter, a professor at Carnegie Mellon University, holds one of the most consequential positions in the artificial intelligence industry. He chairs a four-person Safety and Security Committee at OpenAI, the maker of ChatGPT, with the authority to halt the release of new AI systems it deems unsafe. That oversight covers a broad spectrum of dangers, from malicious actors using powerful AI to create weapons of mass destruction to poorly designed chatbots harming users' mental health. Kolter emphasized in an interview that the committee's scope is not limited to existential threats but encompasses "the entire swath of safety and security issues and critical topics that come up when we start talking about these very widely used AI systems."

Kolter, a computer scientist, was appointed to lead the committee more than a year ago, but its importance grew significantly last week, when agreements with California and Delaware regulators made his oversight a cornerstone of the arrangement allowing OpenAI to adopt a new business structure that makes it easier to raise capital and generate profit. Safety has been central to OpenAI's mission since its founding a decade ago as a nonprofit research laboratory dedicated to developing AI that benefits humanity. After ChatGPT's release sparked a commercial boom, however, the company was accused of prioritizing speed to market over safety. Internal strife, including the temporary ouster of CEO Sam Altman in 2023, brought those worries about straying from the mission into public view. The San Francisco-based company also faced pushback, notably a lawsuit from co-founder Elon Musk, as it moved toward a more traditional for-profit model to advance its technology.

The formal commitments in the agreements with California Attorney General Rob Bonta and Delaware Attorney General Kathy Jennings include a promise to put safety and security decisions ahead of financial considerations as OpenAI forms a new public benefit corporation, technically governed by its nonprofit OpenAI Foundation. Kolter will serve on the nonprofit's board, not the for-profit one, but is granted "full observation rights" to attend all for-profit board meetings and access information pertinent to AI safety decisions. Other than Bonta himself, Kolter is the only individual named in Bonta's memorandum of understanding. Kolter confirmed that the agreements largely reinforce the existing authorities of his safety committee, which was established last year. The other three members of the committee also sit on the OpenAI board, including former U.S. Army General Paul Nakasone, a former commander of U.S. Cyber Command. Sam Altman stepped down from the safety panel last year to enhance its perceived independence. Kolter affirmed the committee's power: "We have the ability to do things like request delays of model releases until certain mitigations are met," though he declined to say whether this power had ever been exercised, citing confidentiality.

Looking ahead, Kolter anticipates a range of concerns about AI agents that the committee will address. These include cybersecurity risks, such as an AI agent accidentally exfiltrating data after encountering malicious text online, and security issues surrounding AI model weights. He also highlighted novel concerns specific to advanced AI models that have no traditional security parallels, such as whether these models could help malicious users develop bioweapons or carry out more sophisticated cyberattacks. The committee is also focused on the direct impact of AI models on individuals, including effects on mental health and the consequences of human-AI interactions. That concern gained stark relevance with a wrongful-death lawsuit against OpenAI from California parents whose teenage son reportedly took his own life after extensive interactions with ChatGPT.

Kolter, who directs Carnegie Mellon’s machine learning department, began studying AI in the early 2000s as a Georgetown University freshman, long before the field gained widespread prominence. He recalled, "When I started working in machine learning, this was an esoteric, niche area. We called it machine learning because no one wanted to use the term AI because AI was this old-time field that had overpromised and underdelivered." Kolter, now 42, has followed OpenAI closely since its inception, even attending its launch party at an AI conference in 2015. Even so, he admits that "very few people, even people working in machine learning deeply, really anticipated the current state we are in, the explosion of capabilities, the explosion of risks that are emerging right now."

The AI safety community is closely monitoring OpenAI's restructuring and Kolter’s work. Nathan Calvin, general counsel at the AI policy nonprofit Encode and a notable critic of OpenAI, expressed "cautious optimism," particularly if Kolter's group receives adequate staffing and plays a robust role. "I think he has the sort of background that makes sense for this role," Calvin said. "He seems like a good choice to be running this." He also emphasized the importance of OpenAI adhering to its founding mission. Calvin cautioned that while the new commitments "could be a really big deal if the board members take them seriously," they could also merely be "words on paper and pretty divorced from anything that actually happens," and that their true impact remains to be seen.

You may also like...

NBA Playoffs Electrify: Thunder Dominate Spurs in Game 3 Thriller!

The Oklahoma City Thunder defeated the San Antonio Spurs 123-108 in Game 3 of the Western Conference finals, taking a 2-...

Premier League Shocker: Bruno Fernandes Crowned Player of the Season!

Bruno Fernandes has been named the Premier League Player of the Season, an award he secures for the first time while equ...

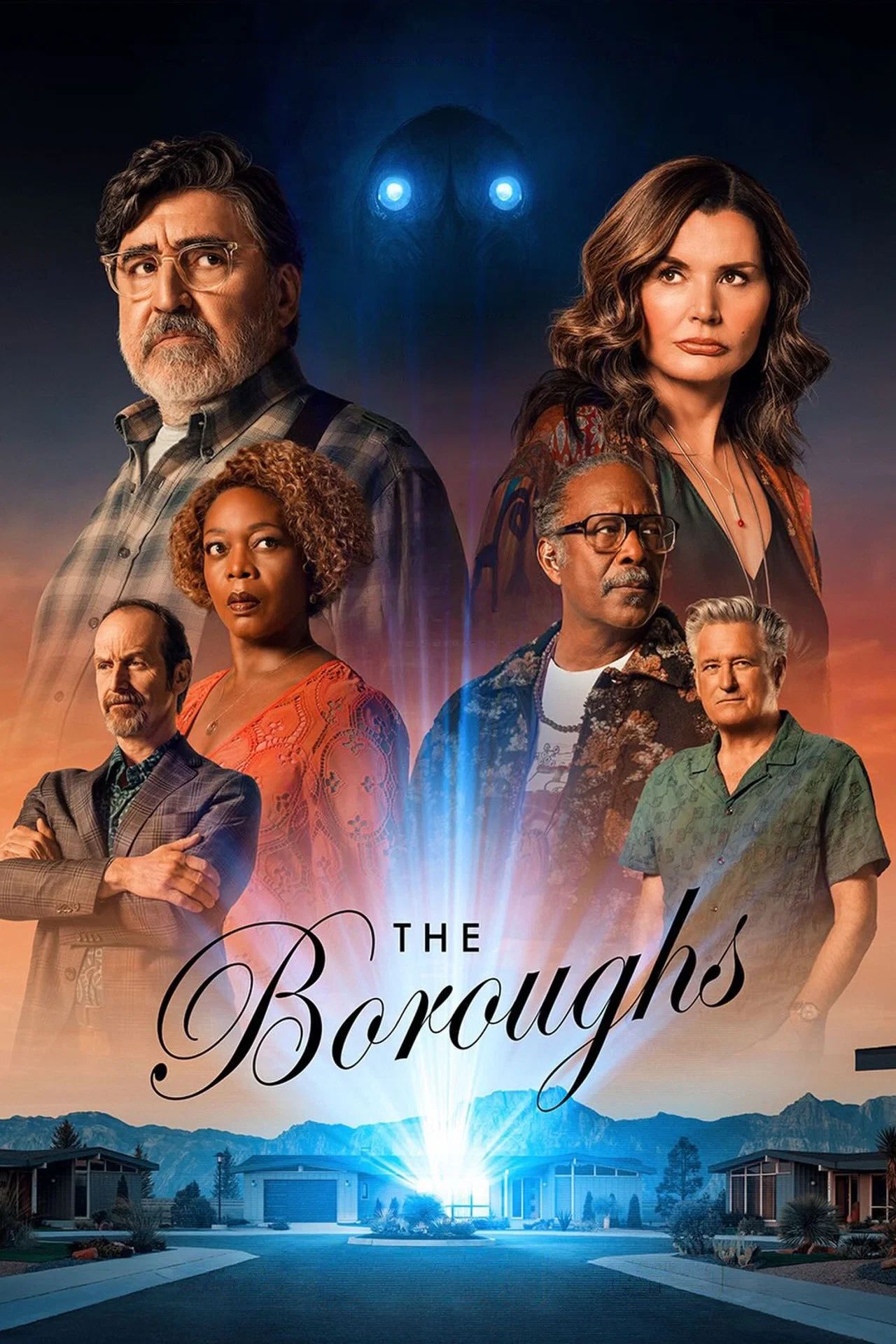

Netflix Unleashes Global Sci-Fi Phenomenon, Hailed as Next 'Stranger Things'

Netflix's new sci-fi series "The Boroughs," executive-produced by the Duffer Brothers, has soared to the top of viewersh...

Cannes Market Frenzy: Netflix and Mubi Battle for Hot Titles

The Cannes Film Market buzzes with major acquisitions as Netflix secures two high-profile films, "La Bola Negra" and "Ge...

ASIAN KUNG-FU GENERATION Rocks 30th Anniversary With Brand New EPs!

ASIAN KUNG-FU GENERATION recently released their 'Fujieda EP' and single 'Skins,' recorded at the unique MUSIC inn Fujie...

Post Malone Unleashes Epic Australian & New Zealand Stadium Tour!

Post Malone is bringing his "Big Ass World Tour" to Australia and New Zealand this October for his largest headline show...

US Imposes Sanctions on Tanzanian Police Over Activist Torture Claims

The United States has sanctioned senior Tanzanian police official Faustine Jackson Mafwele for gross human rights violat...

Ebola Threat Surges in Eastern DR Congo as UN Ramps Up Response

The UN is accelerating its response to a rapidly escalating Ebola outbreak in eastern DRC, where conflict and deep mistr...