OpenAI Unveils Next-Gen GPT-5.4 with Pro and Thinking Capabilities

On Thursday, OpenAI released GPT-5.4, which it describes as its most capable and efficient frontier model for professional work. The new foundation model ships in a standard version and two specialized variants: GPT-5.4 Thinking, optimized for reasoning, and GPT-5.4 Pro, optimized for high performance.

The API version of GPT-5.4 supports context windows of up to 1 million tokens, the largest OpenAI has offered. OpenAI also highlighted improved token efficiency, stating that GPT-5.4 can solve the same problems with considerably fewer tokens than its predecessor, GPT-5.2.

The release comes with significantly improved benchmark results. GPT-5.4 achieved record scores on the computer use benchmarks OSWorld-Verified and WebArena Verified. It also scored 83% on OpenAI’s GDPval test, which evaluates knowledge work tasks. On professional skills, including law and finance, GPT-5.4 took the lead on Mercor’s APEX-Agents benchmark. Brendan Foody, CEO of Mercor, said GPT-5.4 excels at creating "long-horizon deliverables such as slide decks, financial models, and legal analysis," and noted that it delivers top performance faster and at lower cost than competing models.

OpenAI has continued to work on reducing hallucinations and factual errors. Compared to GPT-5.2, the new model is 33% less likely to make errors in individual claims and shows an 18% overall reduction in response errors.

GPT-5.4 also reworks tool calling in its API version with a new system called Tool Search. Previously, system prompts had to define every available tool, which could consume a large number of tokens as the toolset grew. With Tool Search, models look up tool definitions only when required, making requests faster and cheaper, particularly in complex systems with many available tools.
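
The idea behind on-demand tool lookup can be illustrated with a small sketch. This is not OpenAI's API: the tool catalogue, the function names (`eager_prompt`, `lazy_prompt`, `search_tool`, `request_cost`), and the use of character counts as a rough stand-in for tokens are all assumptions made for illustration.

```python
import json

# Hypothetical tool catalogue; names and schemas are illustrative only.
TOOLS = {
    "get_weather": {
        "description": "Return the current weather for a city.",
        "parameters": {"city": "string"},
    },
    "send_email": {
        "description": "Send an email to a single recipient.",
        "parameters": {"to": "string", "subject": "string", "body": "string"},
    },
    "query_database": {
        "description": "Run a read-only SQL query and return the rows.",
        "parameters": {"sql": "string"},
    },
}


def eager_prompt(tools):
    """Up-front style: every full tool definition is sent with the request."""
    return json.dumps(tools, sort_keys=True)


def lazy_prompt(tools):
    """Lookup style: only the tool names are sent with the request."""
    return json.dumps(sorted(tools))


def search_tool(tools, name):
    """Fetch one full definition on demand; raises KeyError if unknown."""
    return {name: tools[name]}


def request_cost(tools, needed):
    """Characters sent when only the tools in `needed` are looked up."""
    upfront = len(lazy_prompt(tools))
    fetched = sum(
        len(json.dumps(search_tool(tools, name), sort_keys=True))
        for name in needed
    )
    return upfront + fetched


if __name__ == "__main__":
    print("all definitions up front:", len(eager_prompt(TOOLS)), "chars")
    print("names plus one lookup:  ", request_cost(TOOLS, ["get_weather"]), "chars")
```

In this toy setup the saving grows with the size of the catalogue, since the up-front cost of the lookup style depends only on the tool names while the cost of the fetched definitions depends only on the tools actually used.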

On AI safety, OpenAI has added a new evaluation of its models’ chain-of-thought, the internal commentary that reveals their reasoning through multi-step tasks. AI safety researchers have long been concerned that reasoning models could misrepresent their chain-of-thought. OpenAI’s new evaluation indicates that deception is less likely to occur in the GPT-5.4 Thinking version, suggesting that "the model lacks the ability to hide its reasoning and that CoT monitoring remains an effective safety tool."

You may also like...

Curry-James Superteam Looms? Blockbuster Warriors Move on the Horizon!

Stephen Curry is reportedly set to meet with LeBron James to discuss a potential blockbuster move to the Golden State Wa...

World Cup Ticket Chaos: FIFA Demands Payment After Glitch Gifts Free Seats!

FIFA is seeking payments from approximately 60 fans who received free 2026 World Cup tickets due to a technical error on...

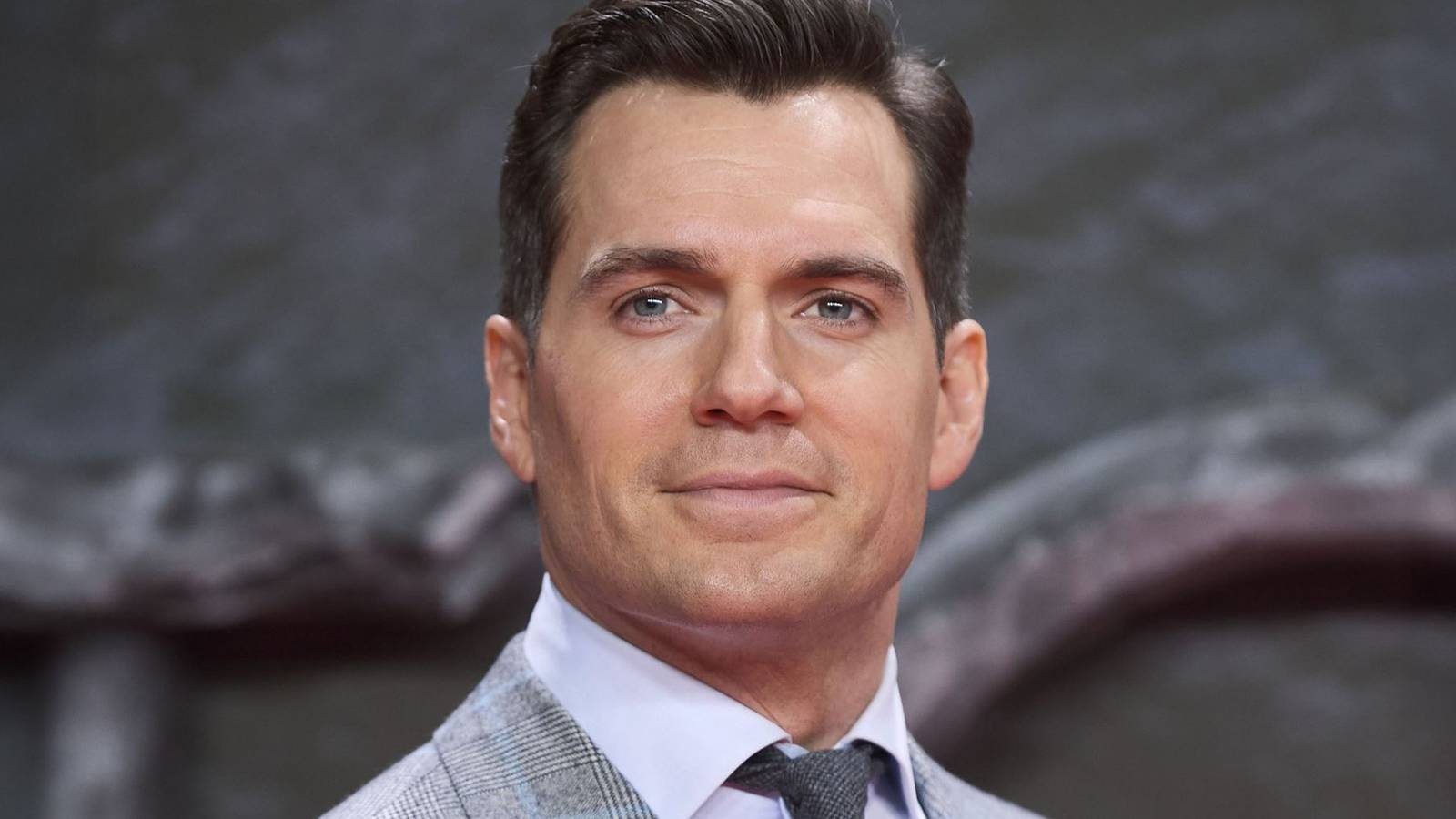

Breaking: Henry Cavill Lands Explosive Role in Netflix's Spy Thriller

Henry Cavill is set to star in a new untitled Netflix action comedy alongside Kevin Hart, playing rival spies whose live...

Disney Unleashes Moana: Live-Action Remake Incoming!

Ten years after its debut, Disney's Moana is returning to theaters as a live-action remake on July 10, 2026, starring Ca...

Irina's Game-Changer: 'For All Mankind' Character Faces Major 'Star City' Revelation

Apple TV's 'Star City' delves into the secretive world of Soviet cosmonauts, exploring the complex, 'toxic' mentor-mente...

James Marsden's Shocking Turn: 'Your Friends & Neighbors' Teases Explosive Season 3

The Season 2 finale of 'Your Friends & Neighbors' delivers a complex narrative, concluding Owen Ashe's impactful but uns...

Nature's Fury: Winter Storms Shut Down Iconic Western Cape Reserves, Halting Tourism

Due to severe winter weather, including heavy rainfall and flooding, several CapeNature reserves in the Western Cape are...

Sky's the Limit! Edelweiss Forges New Direct Air Link Between Namibia and Europe

Namibia's capital, Windhoek, now boasts direct air connectivity with Zurich, Switzerland, marking a significant boost fo...