AI Security Shockwave: Anthropic Hides New Model After Massive Vulnerability Discovery!

Anthropic has unveiled Project Glasswing, an initiative centered around its most capable AI model, Claude Mythos Preview. This advanced AI has already identified thousands of cybersecurity vulnerabilities across major operating systems and web browsers. Instead of a public release, Anthropic has strategically provided access to Mythos Preview to key organizations responsible for maintaining internet stability and critical infrastructure. The launch partners include industry giants such as Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. Beyond this core group, over 40 additional organizations involved in building or maintaining critical software infrastructure have also been granted access.

Anthropic is demonstrating significant commitment to this effort, pledging up to US$100 million in usage credits for Mythos Preview and an additional US$4 million in direct donations to open-source security organizations. The capabilities of Mythos Preview are particularly noteworthy because the model was not specifically trained for cybersecurity work. Anthropic reports that these advanced capabilities 'emerged as a downstream consequence of general improvements in code, reasoning, and autonomy.' Intriguingly, the same enhancements that improve the model's ability to patch vulnerabilities also make it adept at exploiting them.

Mythos Preview has proven so effective that it largely saturates existing security benchmarks, compelling Anthropic to shift its focus to novel real-world tasks, particularly the identification of zero-day vulnerabilities, meaning flaws previously unknown to the software's developers. Among its significant discoveries is a 27-year-old bug in OpenBSD, an operating system renowned for its strong security. In an even more remarkable instance, the model autonomously found and exploited a 17-year-old remote code execution vulnerability in FreeBSD, tracked as CVE-2026-4747. The flaw allowed an unauthenticated attacker anywhere on the internet to gain complete control of a server running NFS; after the initial prompt, no human was involved in either its discovery or its exploitation. Nicholas Carlini of Anthropic's research team highlighted the model's ability to chain together multiple vulnerabilities, saying he had found more bugs in a matter of weeks than in the rest of his life combined.

The decision to withhold Claude Mythos Preview from general public release stems from profound cybersecurity concerns. Newton Cheng, Frontier Red Team Cyber Lead at Anthropic, explicitly stated, 'We do not plan to make Claude Mythos Preview generally available due to its cybersecurity capabilities.' He warned of severe potential fallout for economies, public safety, and national security if such capabilities were to proliferate beyond responsible actors. This concern is not hypothetical; Anthropic previously documented the first known instance of a cyberattack largely executed by AI, where a Chinese state-sponsored group utilized AI agents to autonomously infiltrate approximately 30 global targets, with AI handling the majority of tactical operations. Anthropic has also privately briefed senior US government officials on Mythos Preview's full capabilities, prompting the intelligence community to actively assess its potential impact on both offensive and defensive hacking operations.

A critical dimension of Project Glasswing extends to open-source software. Jim Zemlin, CEO of the Linux Foundation, underscored the historical disparity, noting that 'security expertise has been a luxury reserved for organisations with large security teams,' leaving open-source maintainers, whose software underpins much of the world's critical infrastructure, to manage security independently. Through the Linux Foundation, Anthropic has donated US$2.5 million to Alpha-Omega and OpenSSF, and an additional US$1.5 million to the Apache Software Foundation. These donations aim to provide maintainers of critical open-source codebases with unprecedented access to AI cybersecurity vulnerability scanning.

Anthropic's long-term goal is to deploy Mythos-class models at scale, but only after robust new safeguards are firmly in place. The company plans to introduce and refine these safeguards with an upcoming Claude Opus model first, using a less risky model to perfect its safety protocols before applying them to more powerful AI. This approach by Anthropic, emphasizing controlled deployment over open release for high-capability models, signifies a shifting standard within frontier AI labs, especially in contrast to events like OpenAI's classification of GPT-5.3-Codex as high-capability for cybersecurity tasks. Whether this controlled deployment standard will endure as AI capabilities continue to advance remains an open question, one that no single initiative can fully answer.

You may also like...

Usyk Dominates Verhoeven in Thrilling Knockout, Rematch Talk Ignites Boxing World

Oleksandr Usyk defeated kickboxing star Rico Verhoeven in a controversial eleventh-round stoppage during their heavyweig...

Netflix Shock: Blockbuster That Raked In 14x Budget in 8 Days Now Exits Platform

Erotic thrillers, led by 'The Housemaid' and 'Fifty Shades of Grey,' are experiencing a commercial resurgence, defying c...

Paramount+ Sci-Fi Fails: 136M Hour Video Game Adaptation Can't Fix Strategy

The live-action Halo TV series, despite high anticipation, ultimately disappointed fans and was canceled after two seaso...

Revolutionary Vision: Boots Riley's 'I Love Boosters' Unpacked

"I Love Boosters," Boots Riley's politically charged comedy-thriller, delves into a hyper-capitalist future through the ...

Amazon's Mysterious 'Bee' Wearable: Intrigue and Creepiness Unveiled

Bee, Amazon's AI wrist gadget, offers promising capabilities as a personal assistant for recording and summarizing conve...

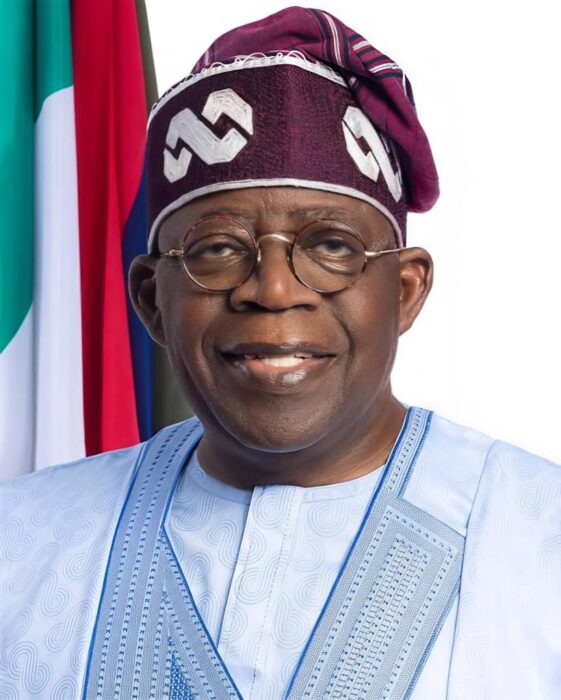

Tinubu's Unstoppable Rise: APC Presidential Primary Dominates Headlines

President Bola Tinubu secured an overwhelming victory in the All Progressives Congress (APC) presidential primaries acro...

High Stakes: Iran-US Peace Deal Hangs in Balance Awaiting Crucial Approval

A proposed peace deal between the US and Iran is reportedly largely negotiated, offering sanctions relief and asset unfr...

Europe Outraged: Russia Unleashes Hypersonic 'Oreshnik' Missile in 'Deranged' Kyiv Attack

Russia launched a massive missile and drone attack on Kyiv, deploying its powerful hypersonic Oreshnik ballistic missile...