AI Ethics Showdown: Anthropic's 'No Weapons' Stance Impresses UK Regulators

The story of Anthropic's potential UK expansion is deeply intertwined with a high-stakes standoff between the AI company and the United States government over the ethical deployment of artificial intelligence. In late February, US Defence Secretary Pete Hegseth reportedly issued an ultimatum to Anthropic CEO Dario Amodei: remove guardrails preventing Claude from being used for fully autonomous weapons and domestic mass surveillance, or face severe repercussions. Amodei, citing moral conscience, steadfastly refused, asserting that some AI applications could "undermine rather than defend democratic values."

Washington's response was swift and decisive. The Trump administration directed all federal agencies to immediately cease using Anthropic's technology. Furthermore, the Pentagon designated Anthropic a supply chain risk, a label typically reserved for adversarial foreign entities, and consequently revoked a substantial US$200 million contract. This action prompted defence technology companies to instruct their employees to abandon Claude in favor of alternative AI solutions.

London watched the dispute unfold and saw an opportunity. Staff within the UK's Department for Science, Innovation and Technology (DSIT), with the backing of Prime Minister Keir Starmer's office, have developed proposals to court Anthropic. The plans, slated to be presented to Amodei during his late May visit, include a possible dual stock listing on the London Stock Exchange and a significant office expansion in the capital.

Anthropic already has a substantial presence in Britain, with approximately 200 employees, and last year appointed former prime minister Rishi Sunak as a senior adviser, so the groundwork for a larger UK operation is already in place. Crucially, the British government's pitch carries an explicit endorsement: Anthropic's principled approach to AI, built on embedded ethical constraints, is viewed as an asset rather than an impediment.

The dispute in the US has also reached the courts. Anthropic's lawyers argued that Claude was not designed for lethal autonomous weapons operating without human oversight or for spying on US citizens, and that such uses would be an abuse of its technology. In March, US District Judge Rita Lin granted a preliminary injunction blocking the blacklist, finding the government's actions "troubling" and likely in violation of the law. The finding is particularly relevant in the UK, where Britain is positioning its regulatory environment as a middle path between Washington's demand for unrestricted military access and Brussels' more stringent EU AI Act: a less constrained environment for AI companies that would not require Anthropic to abandon the guardrails it has defended.

This courtship is part of broader UK efforts to enhance its domestic AI capabilities, exemplified by a recently announced £40 million state-backed research lab. This initiative addresses the acknowledged absence of homegrown competitors to leading US frontier AI labs. The competition for AI talent and investment in London is already intense, with OpenAI committed to making London its largest research hub outside the US, and Google having anchored its DeepMind operations in King’s Cross since 2014. Given its current circumstances, Anthropic represents a particularly consequential target in this competitive landscape.

Independently of its domestic legal battles, Anthropic has been expanding internationally, including opening a Sydney office, its fourth Asia-Pacific location. How much London will benefit from that expansion remains to be seen. The situation is a striking one: a company blacklisted by one G7 government for its AI ethics policy is being actively courted by another G7 government because of that same policy. The outcome of the late May meetings with Amodei will be closely watched.

You may also like...

NBA Playoffs Electrify: Thunder Dominate Spurs in Game 3 Thriller!

The Oklahoma City Thunder defeated the San Antonio Spurs 123-108 in Game 3 of the Western Conference finals, taking a 2-...

Premier League Shocker: Bruno Fernandes Crowned Player of the Season!

Bruno Fernandes has been named the Premier League Player of the Season, an award he secures for the first time while equ...

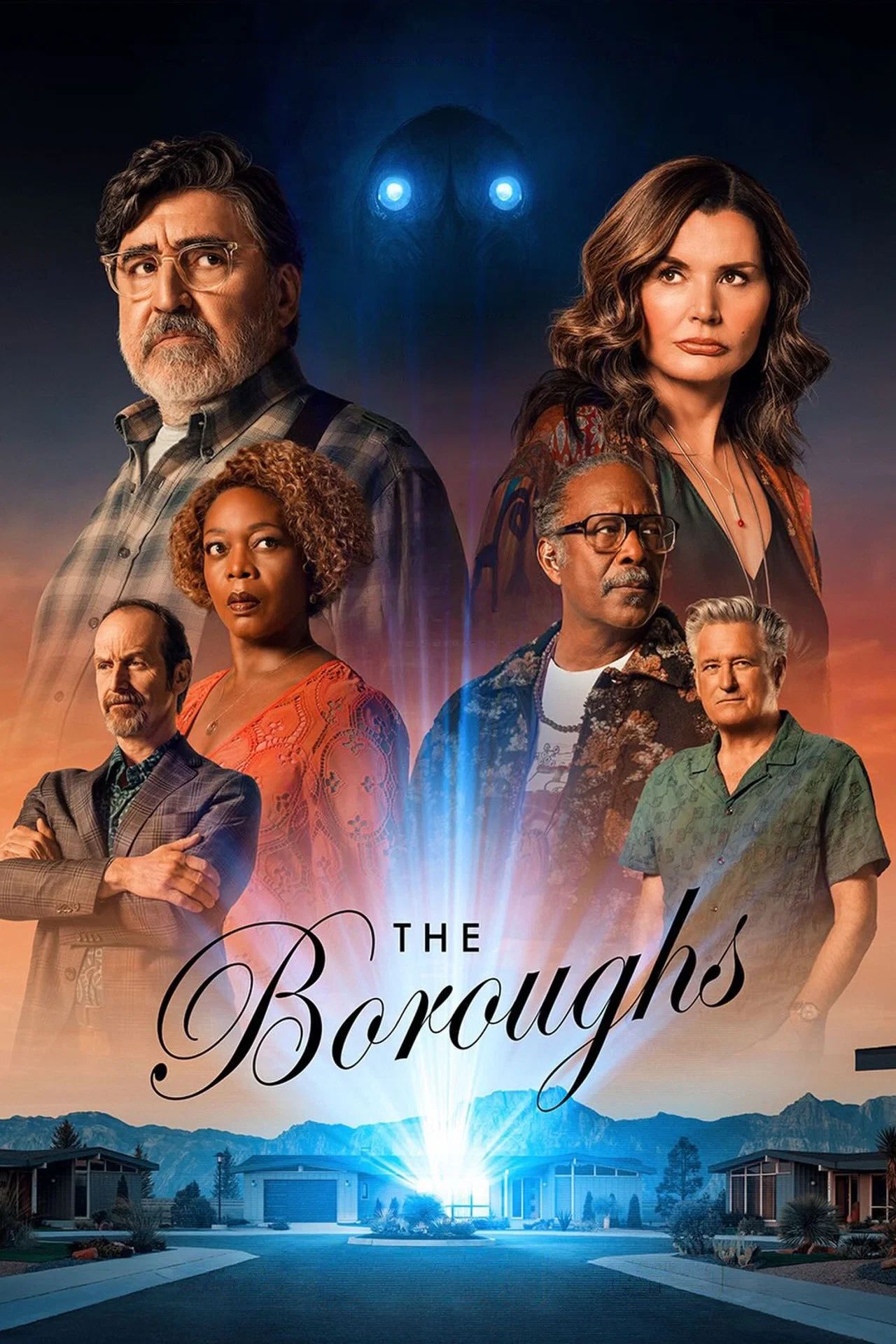

Netflix Unleashes Global Sci-Fi Phenomenon, Hailed as Next 'Stranger Things'

Netflix's new sci-fi series "The Boroughs," executive-produced by the Duffer Brothers, has soared to the top of viewersh...

Cannes Market Frenzy: Netflix and Mubi Battle for Hot Titles

The Cannes Film Market buzzes with major acquisitions as Netflix secures two high-profile films, "La Bola Negra" and "Ge...

ASIAN KUNG-FU GENERATION Rocks 30th Anniversary With Brand New EPs!

ASIAN KUNG-FU GENERATION recently released their 'Fujieda EP' and single 'Skins,' recorded at the unique MUSIC inn Fujie...

Post Malone Unleashes Epic Australian & New Zealand Stadium Tour!

Post Malone is bringing his "Big Ass World Tour" to Australia and New Zealand this October for his largest headline show...

US Imposes Sanctions on Tanzanian Police Over Activist Torture Claims

The United States has sanctioned senior Tanzanian police official Faustine Jackson Mafwele for gross human rights violat...

Ebola Threat Surges in Eastern DR Congo as UN Ramps Up Response

The UN is accelerating its response to a rapidly escalating Ebola outbreak in eastern DRC, where conflict and deep mistr...