AI Shake-Up: Mistral Unleashes New Models, Threatens Reign of Tech Giants

French AI startup Mistral has unveiled its new Mistral 3 family of open-weight models, a strategic launch aimed at asserting its leadership in making artificial intelligence publicly available and serving business clients more effectively than its Big Tech rivals. This comprehensive release comprises ten distinct models: a powerful large frontier model endowed with multimodal and multilingual capabilities, alongside nine smaller, offline-capable, and fully customizable models designed for specialized applications.

The introduction of the Mistral 3 family underscores Mistral's commitment to the open-weight paradigm, in which model weights are publicly released so that anyone can download and run them. This approach stands in stark contrast to closed-source models, such as those behind OpenAI's ChatGPT, whose weights remain proprietary and which are accessible only through APIs or controlled interfaces. Mistral, a two-year-old company founded by former DeepMind and Meta researchers, has raised significantly less capital than industry giants like OpenAI and Anthropic. It is nonetheless working to demonstrate that sheer scale does not always equate to superior performance, particularly for enterprise applications.

Guillaume Lample, co-founder and chief scientist at Mistral, articulated this philosophy, stating, "Our customers are sometimes happy to start with a very large [closed] model that they don’t have to fine-tune … but when they deploy it, they realize it’s expensive, it’s slow. Then they come to us to fine-tune small models to handle the use case [more efficiently]." He further emphasized that the vast majority of enterprise use cases can be effectively tackled by smaller models, especially when they are fine-tuned. Lample also cautioned that initial benchmark comparisons, which might place Mistral's smaller models behind closed-source competitors, can be misleading, as significant gains in performance and efficiency are realized through customization, often allowing them to match or even outperform larger proprietary models.

The flagship of this new family is Mistral Large 3, an open frontier model that rivals key capabilities of larger closed-source AI models such as OpenAI’s GPT-4o and Google’s Gemini 2, while also competing with other open-weight alternatives. Notably, Large 3 is among the first open frontier models to integrate multimodal and multilingual capabilities into a single architecture, placing it on par with Meta’s Llama 3 and Alibaba’s Qwen3-Omni. This moves beyond earlier approaches, such as Mistral's own Pixtral and Mistral Small 3.1, which paired large language models with separate multimodal components. Architecturally, Large 3 features a "granular Mixture of Experts" design with 41 billion active parameters out of 675 billion total, facilitating efficient reasoning across a 256,000-token context window. This blend of speed and capability makes it suitable for diverse applications, including extensive document analysis, complex coding, content creation, sophisticated AI assistants, and workflow automation.
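To put the Mixture of Experts figures in perspective, the short Python sketch below works out what share of Large 3's parameters is active at once, using only the two numbers quoted above. The function name and the validation check are illustrative assumptions, not anything published by Mistral.

```python
# Parameter counts as quoted in the article for Mistral Large 3.
ACTIVE_PARAMS = 41e9   # parameters active at once
TOTAL_PARAMS = 675e9   # parameters in the full model


def active_fraction(active: float, total: float) -> float:
    """Return the share of a sparse model's parameters that is active."""
    if total <= 0 or not 0 <= active <= total:
        raise ValueError("expected 0 <= active <= total and total > 0")
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share of parameters: {fraction:.1%}")  # about 6.1%
```

Roughly 6 percent of the weights do the work at any one time, which is the basis of the efficiency claim. The ratio describes compute only; it says nothing about how much memory is needed to hold the full set of weights.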

Accompanying the frontier model is the Ministral 3 lineup, a collection of smaller models positioned as not merely sufficient but superior for many tasks. The family comprises nine high-performance dense models: three sizes (14 billion, 8 billion, and 3 billion parameters), each offered in three variants: Base (the foundational pre-trained model), Instruct (optimized for conversational and assistant-style workflows), and Reasoning (tailored for complex logic and analytical challenges). Mistral asserts that this range lets developers and businesses match models to their specific requirements, whether they prioritize raw performance, cost efficiency, or niche capabilities. The company claims Ministral 3 models score on par with or better than other open-weight leaders while running more efficiently and generating fewer tokens for equivalent tasks. All variants support vision, handle context windows of 128,000 to 256,000 tokens, and operate across multiple languages.
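The count of nine follows from pairing each of the three sizes with each of the three variants. A minimal Python sketch of that grid is shown below; the label format is hypothetical and chosen only for illustration, so it should not be read as Mistral's official model identifiers.

```python
from itertools import product

# Sizes (billions of parameters) and variants as described in the article.
SIZES_B = (14, 8, 3)
VARIANTS = ("Base", "Instruct", "Reasoning")


def lineup() -> list[str]:
    """Pair every size with every variant (labels are hypothetical)."""
    return [
        f"ministral-3-{size}b-{variant.lower()}"
        for size, variant in product(SIZES_B, VARIANTS)
    ]


models = lineup()
for name in models:
    print(name)
print(len(models))  # 3 sizes x 3 variants = 9
```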

A cornerstone of Mistral's offering is practicality and accessibility. Lample highlighted that Ministral 3 can operate on a single GPU, enabling deployment on affordable hardware, from on-premise servers and laptops to robots and other edge devices with limited or no connectivity. This capability is vital for enterprises needing to retain data in-house, students requiring offline feedback, or robotics teams operating in remote environments. Lample emphasized that enhanced efficiency directly translates to broader accessibility, aligning with Mistral's mission to ensure AI is available to everyone, including those without internet access, thereby preventing its control by a select few "big labs."

This drive for accessibility is also fueling Mistral's expanding focus on physical AI. The company has initiated efforts to integrate its smaller models into robots, drones, and vehicles. Key collaborations include working with Singapore’s Home Team Science and Technology Agency (HTX) on specialized models for robotics, cybersecurity systems, and fire safety; partnering with German defense tech startup Helsing for vision-language-action models for drones; and collaborating with automaker Stellantis on an in-car AI assistant. For Mistral, reliability and independence are considered as crucial as raw performance, with Lample noting that reliance on external APIs with unpredictable downtime is unacceptable for large companies.

Recommended Articles

xAI’s Massive Anthropic Deal Sparks Questions About Its AI Future

Anthropic has acquired all compute capacity at xAI's Colossus 1 data center, signaling a strategic shift for xAI towards...

AI Startup QuTwo Rockets to $380M Valuation in Angel Round Led by Peter Sarlin

Finnish AI lab QuTwo, founded by Peter Sarlin, has secured a €25 million angel round, valuing it at €325 million. Focusi...

OpenAI Faces Strategic Crossroads Amid Acquisitions, Image Struggles, and Rising AI Competition

OpenAI's recent acquisitions of personal finance startup Hiro and media company TBPN are strategic moves aimed at solvin...

OpenAI Leadership Shake-Up as Key Executives Exit Amid Strategic Pivot Away from ‘Side Projects’

OpenAI is undergoing a significant leadership shift with key architects Kevin Weil and Bill Peebles, along with CTO Srin...

Microsoft Rolls Out Groundbreaking Open-Source AI Security Toolkit

Microsoft has unveiled an open-source toolkit for runtime security, designed to impose strict governance on enterprise A...

Sora's Potential Shutdown Sparks Reality Check for AI Video Future

OpenAI has ceased operations for its Sora app and video models, a move seen as a strategic pivot towards enterprise tool...

You may also like...

Usyk Dominates Verhoeven in Thrilling Knockout, Rematch Talk Ignites Boxing World

Oleksandr Usyk defeated kickboxing star Rico Verhoeven in a controversial eleventh-round stoppage during their heavyweig...

Netflix Shock: Blockbuster That Raked In 14x Budget in 8 Days Now Exits Platform

Erotic thrillers, led by 'The Housemaid' and 'Fifty Shades of Grey,' are experiencing a commercial resurgence, defying c...

Paramount+ Sci-Fi Fails: 136M Hour Video Game Adaptation Can't Fix Strategy

The live-action Halo TV series, despite high anticipation, ultimately disappointed fans and was canceled after two seaso...

Revolutionary Vision: Boots Riley's 'I Love Boosters' Unpacked

"I Love Boosters," Boots Riley's politically charged comedy-thriller, delves into a hyper-capitalist future through the ...

Amazon's Mysterious 'Bee' Wearable: Intrigue and Creepiness Unveiled

Bee, Amazon's AI wrist gadget, offers promising capabilities as a personal assistant for recording and summarizing conve...

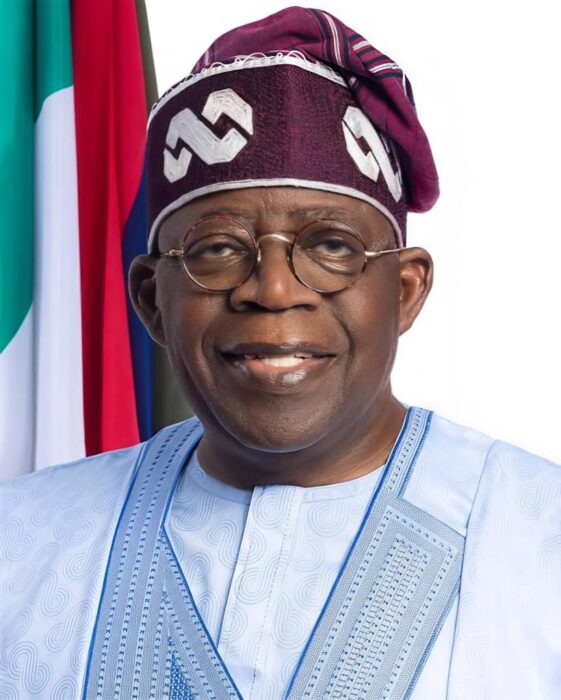

Tinubu's Unstoppable Rise: APC Presidential Primary Dominates Headlines

President Bola Tinubu secured an overwhelming victory in the All Progressives Congress (APC) presidential primaries acro...

High Stakes: Iran-US Peace Deal Hangs in Balance Awaiting Crucial Approval

A proposed peace deal between the US and Iran is reportedly largely negotiated, offering sanctions relief and asset unfr...

Europe Outraged: Russia Unleashes Hypersonic 'Oreshnik' Missile in 'Deranged' Kyiv Attack

Russia launched a massive missile and drone attack on Kyiv, deploying its powerful hypersonic Oreshnik ballistic missile...